Watch this video. It’s real and it’s scary. Using voice cloning, criminals can talk money out of terrified relatives, who often lose the ability to think rationally.

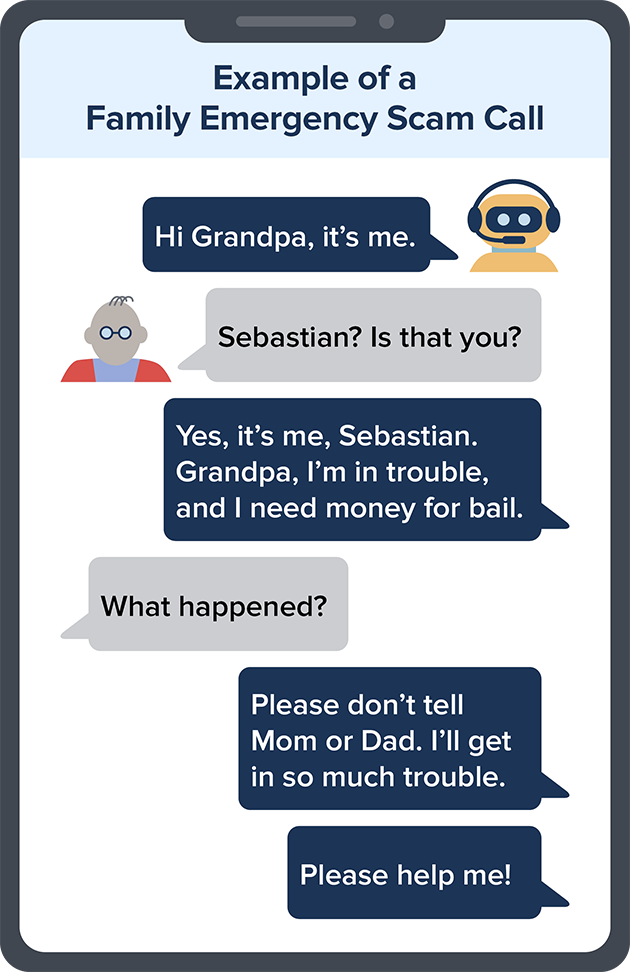

A scammer could use AI to clone the voice of your loved one. All s/he needs is a short audio clip of your family member’s voice — which s/he could get from content posted online — and a voice-cloning program. When the scammer calls you, s/he’ll sound just like your loved one.

Using deep-learning algorithms, audio editing and engineering, and synthetic voice generation, it is increasingly possible to convincingly simulate a person’s voice. And now, chatbots like ChatGPT are starting to generate realistic scripts with adaptive real-time responses (The Conversation). By combining these technologies with voice generation, a deepfake goes from being a static recording to a live, lifelike avatar that can convincingly have a phone conversation. This is what happened in the video below.

@payton.bock AI VOICE CLONING SCAM PSA!!!!! #fyp #ai #scam #voicecloning ♬ original sound – PAYTON

From the UK magazine The Spectator comes this story:

A few weeks ago a friend received a phone call from her son, who lives in another part of the country. ‘Mum, I’ve had an accident,’ said the son’s voice. She could hear how upset he was. Her heart began to pound. ‘Are you OK? What happened?’ she said.

‘I’m so sorry Mum, it wasn’t my fault, I swear!’ The son explained that a lady driver had jumped a red light in front of him and he’d hit her. She was pregnant, he said, and he’d been arrested. Could she come up with the money needed for bail?

It was at this point that my friend, though scared and shocked, felt a prick of suspicion. ‘OK, but where are you? Which police station?’

The call cut off. Instead of waiting for her son to ring back, she dialled his mobile number: ‘Where are you being held?’ But this time she was speaking to her real son, not a fake of his voice generated by artificial intelligence. He was at home, all fine and dandy, and not in a police station at all.

In another instance from the same article, an elderly Canadian couple paid up. But even when she knew it was a scam, she still couldn’t shake the feeling that she’d spoken to her son. The sound of him went straight to her heart. You respond instinctively and emotionally.

What do you do?

So how can you tell if a family member is in trouble or if it’s a scammer using a cloned voice?

Don’t trust the voice. Call the person who supposedly contacted you and verify the story. Use a phone number you know is theirs. If you can’t reach your loved one, try to get in touch with them through another family member or their friends.

Scammers ask you to pay or send money in ways that make it hard to get your money back. If the caller says to wire money, send cryptocurrency, or buy gift cards and give them the card numbers and PINs, those could be signs of a scam.

If you spot a scam, report it to the FTC at ReportFraud.ftc.gov.